LLM observability, why production AI dies without it

What observability means for LLM-based systems, the specific signals to monitor, and why teams that skip observability ship AI products that silently degrade.

Why LLM systems fail silently

Traditional software fails loudly. An exception throws, a 500 returns, a log shows a stack trace. You know something's wrong quickly.

LLM systems fail quietly. The model returns plausible-looking but wrong output. Retrieval misses relevant context. A prompt injection slips through. The user gets a wrong answer and either doesn't notice or complains days later when enough wrong answers have accumulated. By then the data is dirty, trust is eroded, and debugging requires archeology.

This is why LLM observability matters more than traditional observability, the failure modes are subtler and the cost of delayed detection is higher.

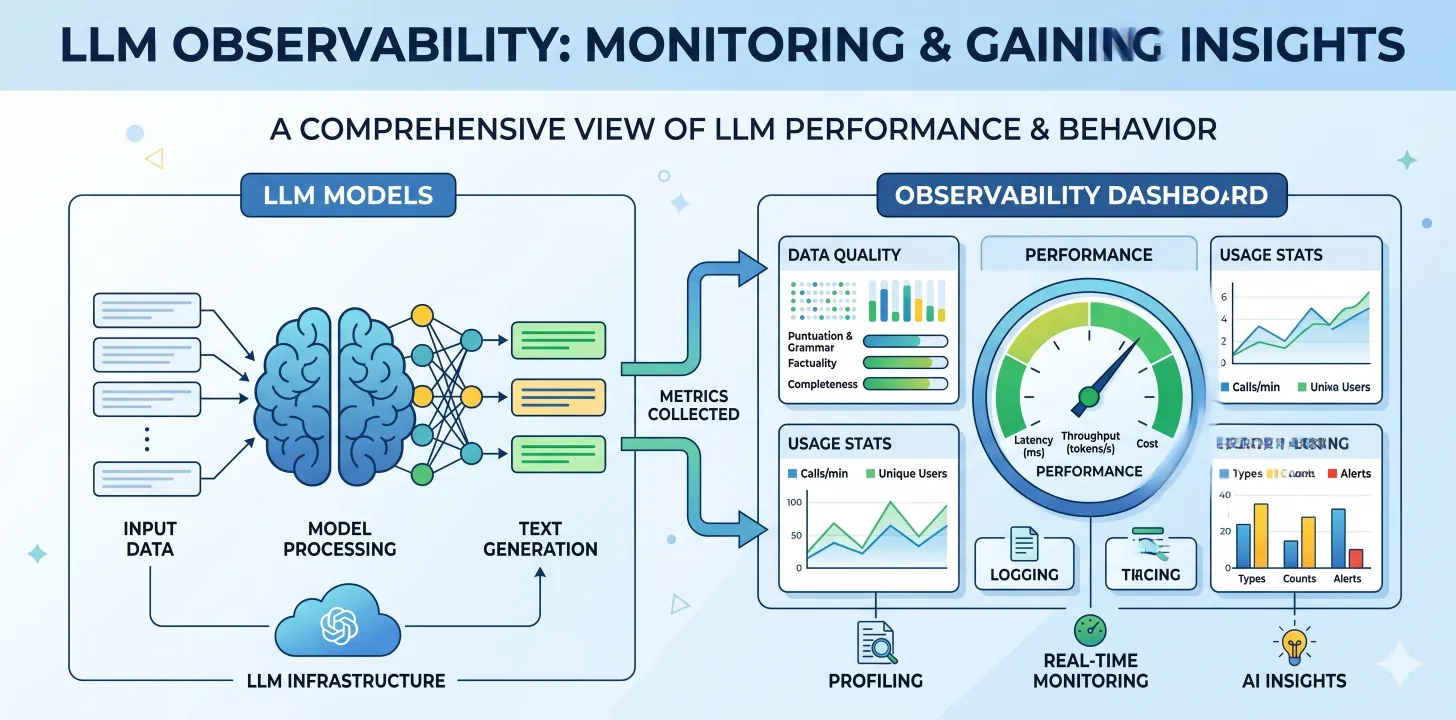

What to log for every LLM interaction

Every LLM call in production should log:

- Input: the user query or triggering event, before prompt templating

- Prompt: the full prompt sent to the model, including system prompt + retrieved context + user query

- Retrieval context: what was retrieved, from where, with what relevance scores

- Model metadata: provider, model name, model version, temperature, max tokens

- Tool calls: if the model invoked functions/tools, what were they and what did they return

- Output: the full model response

- Token usage: input and output tokens (for cost and latency analysis)

- Latency: end-to-end and per stage (retrieval, LLM, tool calls)

- Cost: computed from token usage and pricing

- Session/user context: trace ID, user ID, session ID for correlation

This data answers the questions that come up during debugging:

- Why did the agent say X?

- What context was it using?

- Did we change anything recently that affected quality?

- Is latency or cost drifting?

What to monitor continuously

Beyond logging, specific metrics need continuous monitoring:

Accuracy (golden set regression)

Maintain a golden set of inputs with expected outputs. Run it on every deployment, then daily in production. Alert on regression greater than a threshold (e.g., 5% drop in accuracy).

Retrieval recall

For retrieval-grounded agents, monitor whether expected documents are being retrieved for queries where ground truth exists. Drops in retrieval recall usually precede accuracy drops.

Hallucination rate

Measured by asking the agent questions where you know the answer and checking if the response is grounded in source material or fabricated. Can be automated with a judge LLM for scale.

Latency tails

P50, P95, P99 latency. LLM latency is often bimodal, most requests fast, some slow. Track the tails.

Cost per interaction

Total cost / total interactions over rolling windows. Alert on significant increases (often indicates prompt inflation or retrieval bloat).

Escalation rate (if human-in-the-loop)

Rate at which agent decisions are overridden by humans. Increasing escalation often signals quality degradation.

Error rate

LLM API errors, retrieval errors, tool-call errors. Each tracked separately because the remediation differs.

Tools that work

Several mature tools handle LLM observability:

- Langfuse, open-source, self-hostable, strong on tracing. Good default for teams that want ownership.

- Helicone, proxy-based, very easy setup, good analytics UI.

- LangSmith, tightly coupled with LangChain. Good if you're already on LangChain.

- OpenTelemetry + custom exporters, if you have existing OTEL infrastructure, extending it for LLM observability makes sense.

- Arize / WhyLabs, more enterprise, focused on ML observability including LLMs.

We default to Langfuse for most client engagements. It's open-source, self-hostable (important for data residency), and covers 90% of what teams need without the enterprise overhead.

Observability drives improvement

With good observability, improvement cycles become routine:

- Monitor catches a quality drop on Monday

- Engineering reviews traces, finds that retrieval is returning fewer relevant chunks for certain queries

- Reranking parameters are tuned in staging

- A/B test against production shows improvement

- Rollout, with observability catching any regression

Without observability, this cycle doesn't happen. Quality degrades; nobody notices for weeks; by then debugging is archaeology rather than engineering.

Common mistakes

1. Logging only inputs and outputs. Missing retrieval, tool calls, and metadata makes debugging impossible.

2. Not sampling at scale. At high volume, log 100% of inputs/outputs but sample detailed traces (e.g., 10%). Cost and storage become issues without sampling.

3. No PII handling. LLM inputs often contain PII. Logging must respect privacy, redact, tokenize, or exclude PII before it hits observability storage.

4. No production/staging separation. Staging and production observability should be separate to avoid polluting metrics with test data.

5. Alert fatigue. Too many alerts → ignored alerts. Tune thresholds and route alerts to the right people.

Conclusion

LLM observability is the difference between production AI that improves over time and production AI that silently degrades. It's boring infrastructure work that pays back enormously. If you're shipping anything AI-powered to production, invest in observability on day one.

If you're running production AI without observability and want to fix that, talk to us.

Related reading: Ten agentic AI deployments · Real cost of enterprise AI · Build vs buy for AI agents