Customer support

Tier-1 agents resolving 40-60% of tickets.

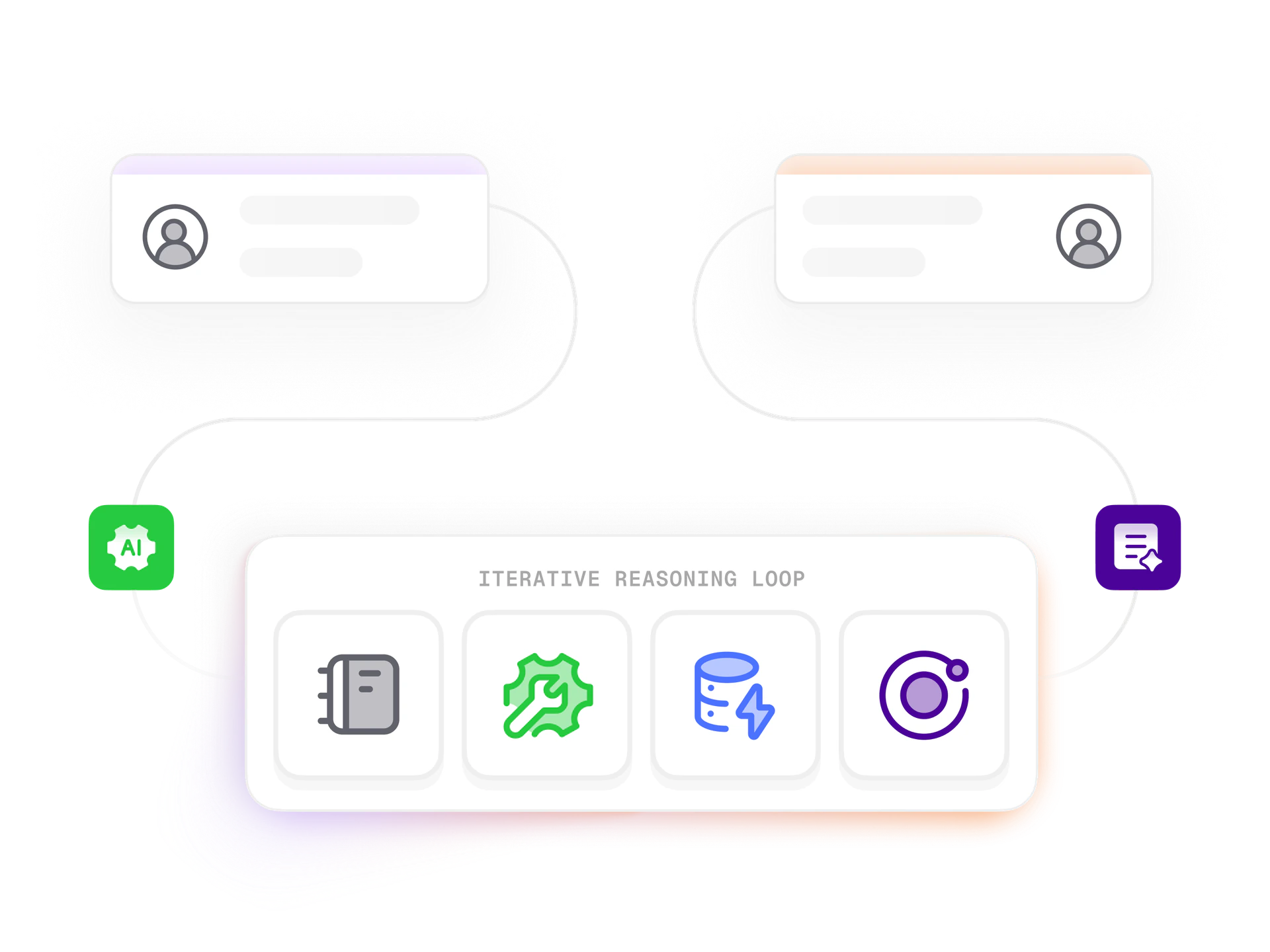

Agents grounded in your data, governed by your rules, observable end-to-end. They close tickets, reconcile invoices, and handle procurement, with full audit trails.

Most enterprise AI pilots don't reach production. Demos look incredible, then reality hallucinates a refund policy that doesn't exist or fails compliance review. Production AI is 20% prompt engineering, 80% surrounding infrastructure.

We ship agents that work, hybrid retrieval, eval harnesses, CI guardrails with adversarial tests, full decision logs, human-in-the-loop on high-stakes. Where it pays off, support (40-60% tier-1), invoices (85%+ touchless), procurement, document review, HR helpdesk.

Tier-1 agents resolving 40-60% of tickets.

OCR + LLM 3-way match. 85% touchless.

Vendor negotiation within policy, outliers flagged.

Contract, lease, compliance, source-cited.

Policy-aware leave, benefits, expense questions.

Decisions logged, sources cited, escalations tested.

Each layer is where a different class of failure hides. Skip one and find out at scale.

Hybrid vector + keyword search, careful chunking, cross-encoder reranking, eval harness for precision and recall.

Prompts are versioned artifacts. Guardrails enforced as CI policy tests, hundreds of adversarial cases per deploy.

Every decision logs inputs, tools, sources, model and prompt versions, rationale. Replayable by compliance and ops.

Agents compose, humans commit. Customer promises, financial commitments, legal outputs route through review.

Gateway-routed (Vercel AI Gateway, OpenRouter, self-hosted), model swaps are config, not code.

Shadow control for 4-8 weeks measuring resolution, accuracy, CSAT, cost. Scale only if numbers are real.

B2B SaaS support agent, 4.6/5 CSAT against 12-week blind control.

Mid-market distributor, 13% edge cases route to human.

Services-firm legal team, lawyer verifies flags and references.

30-min feasibility call with a senior AI engineer. Use case assessed, build mapped, fixed-fee pilot scope, observability and audit trails included.

Book an AI feasibility call