GEO optimization: how to get cited by ChatGPT, Claude, and Perplexity

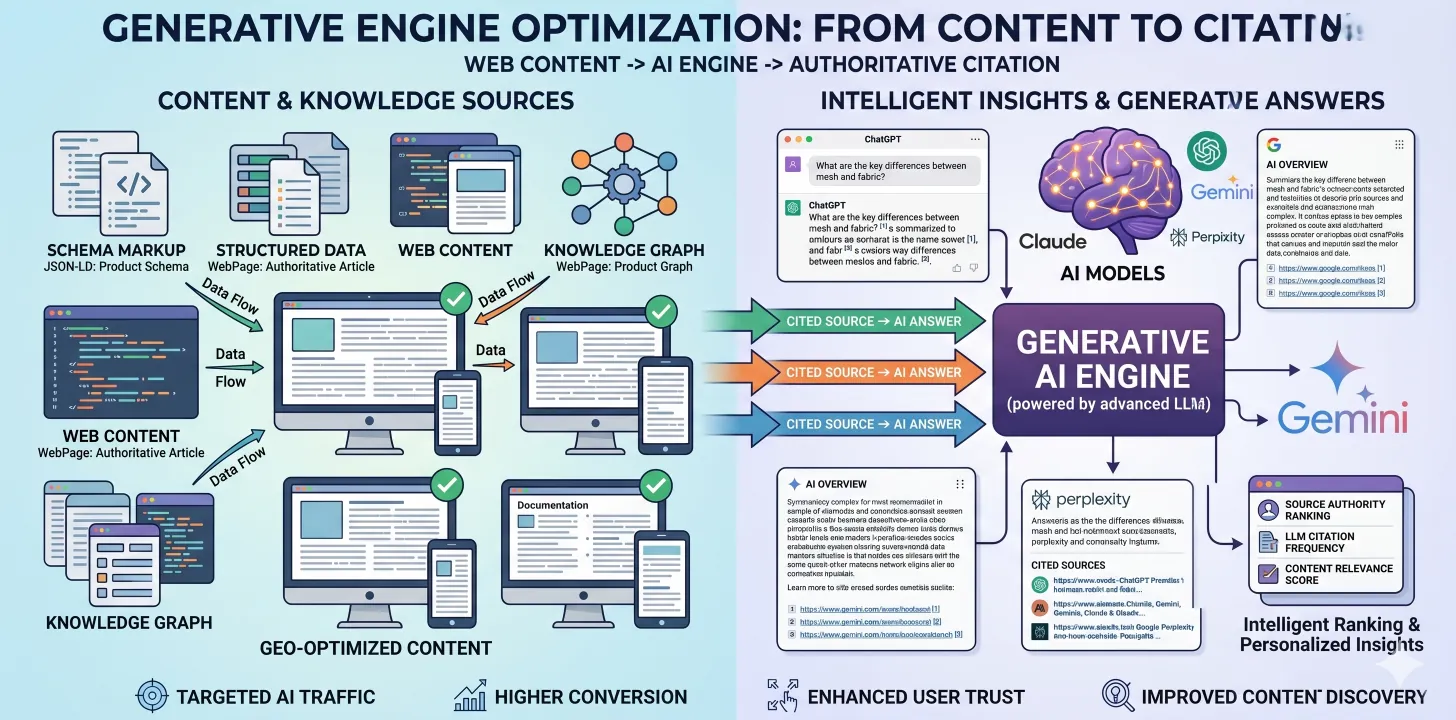

Generative Engine Optimization is the practice of structuring web content for citation by AI search engines. This guide covers specific techniques, schema markup, and measurement.

Why traditional SEO is evolving

For two decades SEO optimized for a specific thing: showing up in Google's 10 blue links. That paradigm is shifting. A meaningful and growing fraction of searches now happen inside AI interfaces: ChatGPT, Claude, Perplexity, Gemini, Bing Copilot, and AI-powered features in Google itself. These interfaces don't return 10 blue links. They return synthesized answers with citations.

Getting cited matters. Being the source an AI engine quotes when answering a user's question is the new version of ranking on page 1 of Google. The content and technical patterns that work for traditional SEO overlap with, but don't fully cover, what works for AI search.

This is called Generative Engine Optimization (GEO). Here's what we've learned about it from optimizing client sites over the past 18 months.

What AI engines reward

AI engines are selective about what they cite. From observed patterns and crawler behavior analysis:

Specific, verifiable claims

AI engines prefer citing content with concrete claims they can verify. "Our Odoo implementations typically take 12-20 weeks and cost $40-150K" is citable. "Fast implementations at competitive pricing" isn't.

Comprehensive coverage of topics

Shallow listicles don't get cited; AI engines prefer in-depth content that covers a topic thoroughly. In our experience, 2,000-4,000 word posts outperform 500-word ones.

Structured data

Schema.org JSON-LD markup makes content machine-readable. AI engines use structured data to understand entity types, relationships, and context.

Source authority signals

Author information, organizational entity data, linking patterns, and citations to other credible sources all contribute to perceived authority.

Freshness and updates

Content with recent publish or update dates gets preferred, especially for time-sensitive topics (pricing, product features, regulations).

Technical GEO patterns that work

1. Schema.org JSON-LD everywhere

At minimum, every page should have:

- Organization schema for your company (once, on every page)

- WebSite schema

- Breadcrumb schema for navigation context

Page-specific schemas:

- Service pages: Service schema with description, area served, provider

- Blog posts: Article schema with author, date published, date modified

- FAQ pages: FAQPage schema with Q&A pairs

- How-to content: HowTo schema with steps

- Product/pricing: Offer, PriceSpecification schemas

- Reviews/testimonials: Review, Rating schemas

AI engines consume this structured data heavily. Invest in getting it right.
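As a minimal sketch of what this markup looks like in practice, the Python below builds an Organization object and an Article object and wraps each in the script tag that goes in the page head. The company name, URL, author, and dates are placeholders we made up for illustration; the property names (headline, author, datePublished, dateModified, publisher) are standard Schema.org vocabulary.

```python
import json


def organization_schema(name: str, url: str) -> dict:
    """Build a minimal Organization JSON-LD object."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
    }


def article_schema(headline: str, author_name: str, date_published: str,
                   date_modified: str, publisher: dict) -> dict:
    """Build an Article JSON-LD object with author and date fields."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "dateModified": date_modified,
        "publisher": {
            "@type": "Organization",
            "name": publisher["name"],
            "url": publisher["url"],
        },
    }


def to_script_tag(schema: dict) -> str:
    """Serialize a schema dict into a JSON-LD script tag.

    "</" is escaped as "<\\/" (a valid JSON escape) so that a value
    containing "</script>" cannot end the tag early.
    """
    body = json.dumps(schema, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values, for illustration only.
org = organization_schema("Example Co", "https://example.com")
article = article_schema(
    "GEO optimization guide", "Jane Doe", "2025-06-01", "2026-01-15", org
)
print(to_script_tag(org))
print(to_script_tag(article))
```

Generating the markup from one place in your templates, rather than hand-editing it per page, makes it easier to keep the Organization data identical everywhere.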

2. Clean semantic HTML

Modern LLMs parse HTML reasonably well, but clean semantic markup improves comprehension:

- Proper heading hierarchy (h1 → h2 → h3, no skips)

- Meaningful tag usage (article, section, nav, aside, figure)

- Meaningful alt text on images

- Proper link anchor text (not "click here")
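The heading rule is easy to check automatically. Here is a rough sketch using Python's standard html.parser that reports any place where a page jumps more than one heading level downward (for example h1 straight to h3). The class and function names are our own, and the sample markup is invented.

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Record heading levels in document order and flag skipped levels."""

    def __init__(self):
        super().__init__()
        self.levels = []  # heading levels in the order they appear
        self.skips = []   # (previous_level, level) pairs that skip a level

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is enough.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            level = int(tag[1])
            if self.levels and level > self.levels[-1] + 1:
                self.skips.append((self.levels[-1], level))
            self.levels.append(level)


def find_heading_skips(html: str) -> list:
    """Return the list of (from_level, to_level) heading skips in html."""
    audit = HeadingAudit()
    audit.feed(html)
    audit.close()
    return audit.skips


sample = "<h1>Guide</h1><h3>Details</h3><h2>Pricing</h2><h3>Tiers</h3>"
print(find_heading_skips(sample))
```

Moving back up the hierarchy (h3 to h2) is fine; only a downward jump of more than one level is reported.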

3. llms.txt and AI crawler configuration

The emerging llms.txt convention is a file at your site root that describes the content you want AI systems to read and cite. It is not universally adopted yet, so treat it as a low-cost addition rather than a guarantee of citation.

robots.txt should explicitly allow the AI crawlers you want to index you:

- GPTBot (OpenAI)

- ChatGPT-User (OpenAI real-time)

- ClaudeBot, Claude-Web (Anthropic)

- PerplexityBot

- Google-Extended (separate from Googlebot; controls training data use)

- Applebot-Extended
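One way to keep these rules intentional is to generate robots.txt from an explicit allow list and then test it. The sketch below does that with Python's standard urllib.robotparser. The /admin/ path and the example.com URLs are placeholders, and the helper names are ours; the user-agent tokens are the ones listed above.

```python
from urllib.robotparser import RobotFileParser

# Tokens from the list above; trim this to the crawlers you actually want.
AI_CRAWLERS = [
    "GPTBot",
    "ChatGPT-User",
    "ClaudeBot",
    "Claude-Web",
    "PerplexityBot",
    "Google-Extended",
    "Applebot-Extended",
]


def build_robots_txt(allowed_agents, private_paths=("/admin/",)) -> str:
    """Emit one robots.txt group per agent: block private paths, allow the rest."""
    lines = []
    for agent in allowed_agents:
        lines.append(f"User-agent: {agent}")
        for path in private_paths:
            lines.append(f"Disallow: {path}")
        lines.append("Allow: /")
        lines.append("")  # a blank line ends the group
    return "\n".join(lines)


def is_allowed(robots_txt: str, agent: str, url: str) -> bool:
    """Check a URL against the generated rules using the stdlib parser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


robots_txt = build_robots_txt(AI_CRAWLERS)
print(robots_txt)
print(is_allowed(robots_txt, "GPTBot", "https://example.com/blog/geo-guide"))
print(is_allowed(robots_txt, "GPTBot", "https://example.com/admin/settings"))
```

Real crawlers may interpret edge cases differently from the standard-library parser, so treat this as a sanity check on your intent, not a guarantee of crawler behavior.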

4. Answer-format content

Content structured to directly answer questions gets cited more than rambling content. Good patterns:

- Heading matches a user question

- First paragraph provides a direct answer

- Subsequent paragraphs provide supporting detail

- Claims are specific and verifiable

This mirrors the "featured snippet" pattern that worked for Google but matters even more for AI search.

5. Internal linking and entity context

AI engines build entity graphs from your content. Consistent internal linking helps:

- Link to related content with descriptive anchor text

- Mention entities (tools, companies, people, places) consistently

- Use proper capitalization and formatting for proper nouns

6. Updated content signals

AI engines favor fresh content. For evergreen topics, periodically update posts and set dateModified in the Article schema to the date of the revision. Note updates in the post itself ("Updated January 2026 with Q4 2025 data").
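A small sketch of keeping the two signals in sync: the hypothetical helper below takes an existing Article object, sets dateModified, and produces the matching visible note so the schema and the page text never disagree. The field values are placeholders.

```python
from datetime import date


def mark_updated(article: dict, updated_on: date, summary: str):
    """Return a copy of the Article schema with dateModified set,
    plus the human-readable update note to show in the post."""
    refreshed = {**article, "dateModified": updated_on.isoformat()}
    # %B is the full month name; it follows the process locale (English by default).
    note = f"Updated {updated_on.strftime('%B %Y')} with {summary}"
    return refreshed, note


article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Placeholder headline",
    "datePublished": "2025-06-01",
    "dateModified": "2025-06-01",
}
refreshed, note = mark_updated(article, date(2026, 1, 15), "Q4 2025 data")
print(refreshed["dateModified"])
print(note)
```

Only bump the date when the content has actually changed; a new date on unchanged text is the kind of schema mismatch covered under common mistakes.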

Content patterns that work

Comparison content

AI engines get asked "X vs Y" questions constantly. In-depth, honest comparisons get cited. Include specific dimensions, real numbers, and honest trade-offs.

Cost and pricing content

AI engines get asked "how much does X cost" frequently. Content with real ranges and context gets cited heavily.

Methodology and process content

"How do you do X" queries are common. Documented methodologies with specific steps and rationale get cited.

Case studies with numbers

Concrete outcomes with metrics beat generic success stories. "Reduced close cycle from 14 to 5 days" is more citable than "transformed our close process."

FAQ content with real answers

Real FAQ content (not marketing-speak) gets cited heavily. Use FAQPage schema.
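For reference, here is a minimal sketch that turns a list of question and answer pairs into FAQPage markup. The function name is ours and the sample question is illustrative (it reuses the implementation timeline quoted earlier); the Question, acceptedAnswer, and Answer types are standard Schema.org vocabulary.

```python
import json


def faq_page_schema(pairs) -> dict:
    """Build FAQPage JSON-LD from an iterable of (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Illustrative content; the questions and answers must also appear on the page.
faqs = [
    (
        "How long does an Odoo implementation take?",
        "Our implementations typically take 12-20 weeks.",
    ),
]
print(json.dumps(faq_page_schema(faqs), indent=2))
```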

What doesn't work

- Thin content (under 500 words) rarely gets cited

- Marketing-speak without substance gets passed over

- Lists without depth (listicles without explanation) don't perform

- Pure opinion without data doesn't carry authority

- Stale content (not updated in 2+ years) gets deprioritized for time-sensitive topics

Measuring GEO success

AI crawler traffic in server logs

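Search your server logs for GPTBot, ClaudeBot, and PerplexityBot. Google-Extended is a robots.txt control token rather than a separate user agent, so it won't show up in logs; Google's crawling still appears under its regular user agents. Rising volume over time indicates AI engines are finding your content.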
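A rough sketch of that log search, assuming access logs in the common combined format with a bracketed timestamp such as [10/Jan/2026:08:01:12 +0000]. It counts hits per day and crawler so a trend is visible. The log lines are made-up sample data, and the user-agent strings in them are simplified. Google-Extended is left out because it is a robots.txt token, not a user agent that appears in requests.

```python
import re
from collections import Counter

# Substrings to look for in the user-agent field.
AI_USER_AGENTS = ("GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Captures the day part of a combined-log timestamp, e.g. 10/Jan/2026.
DAY_PATTERN = re.compile(r"\[(\d{2}/[A-Za-z]{3}/\d{4})")


def count_ai_crawler_hits(log_lines) -> Counter:
    """Count requests per (day, crawler) for known AI user agents."""
    hits = Counter()
    for line in log_lines:
        day_match = DAY_PATTERN.search(line)
        if not day_match:
            continue  # not a recognizable access-log line
        lowered = line.lower()
        for agent in AI_USER_AGENTS:
            if agent.lower() in lowered:
                hits[(day_match.group(1), agent)] += 1
                break  # count each request once
    return hits


# Made-up sample lines for illustration.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jan/2026:08:01:12 +0000] "GET /blog/geo HTTP/1.1" 200 5120 "-" "GPTBot/1.0"',
    '203.0.113.5 - - [10/Jan/2026:08:03:40 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "GPTBot/1.0"',
    '198.51.100.7 - - [11/Jan/2026:09:15:02 +0000] "GET /blog/geo HTTP/1.1" 200 5120 "-" "ClaudeBot/1.0"',
    '192.0.2.44 - - [11/Jan/2026:09:20:31 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

for (day, agent), count in sorted(count_ai_crawler_hits(SAMPLE_LOG).items()):
    print(day, agent, count)
```

User-agent strings can be spoofed, so treat these counts as a trend indicator rather than exact figures.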

Branded query testing

Periodically test your brand and topics in ChatGPT, Claude, Perplexity, Gemini. See if you're cited, what specific content is cited, and how you're characterized.

Referral traffic from AI interfaces

Some AI tools pass referral headers when users click through. Not universal but growing.
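Where referrers are available, a simple hostname lookup is enough to segment them. The sketch below is one way to do it; the hostname list is our assumption about which domains these tools send traffic from, so check it against what actually appears in your own analytics before relying on it.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own referral data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer: str):
    """Return the AI tool name for a referrer URL, or None if unrecognized."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


print(classify_referrer("https://www.perplexity.ai/search?q=odoo+cost"))
print(classify_referrer("https://www.google.com/search?q=odoo+cost"))
```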

Structured data validation

Use Google's Rich Results Test and the Schema.org validator to confirm your schema is parseable.
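Before reaching for those tools, a quick local check can catch outright syntax errors. This sketch pulls every application/ld+json script out of a page with Python's standard html.parser and tries to parse it as JSON. It only checks that the JSON parses, not that the properties are valid Schema.org, and the class and function names are our own.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every JSON-LD script block in a page."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def check_jsonld(html: str) -> list:
    """Return one (schema_type, error) pair per JSON-LD block found."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    results = []
    for raw in extractor.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            results.append((None, str(exc)))
            continue
        schema_type = data.get("@type") if isinstance(data, dict) else None
        results.append((schema_type, None))
    return results


page = """
<html><head>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "FAQPage"}</script>
<script type="application/ld+json">{"@type": "Article",}</script>
</head><body></body></html>
"""
for schema_type, error in check_jsonld(page):
    print(schema_type, error)
```

The second block in the sample has a trailing comma, a common hand-editing mistake that makes the whole block unreadable to parsers.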

Citation tracking tools

Emerging tools like Otterly.ai, Peec.ai, and similar track when your brand is cited by AI engines. Still early but useful.

Common mistakes

- Schema without content to match: don't stuff schema. If you claim FAQPage, the page should actually have FAQs. Mismatch hurts credibility.

- Blocking AI crawlers by default: some robots.txt files disallow bots out of caution, losing citation opportunities. Be intentional.

- Over-optimizing for keywords: AI engines understand semantic meaning. Keyword-stuffed content performs worse than natural, substantive writing.

- Ignoring entity consistency: referring to your company inconsistently across pages weakens entity recognition.

- No update cadence: content that ages without updates loses priority.

Conclusion

GEO isn't a fundamentally different practice from SEO; it's SEO with an emphasis on citability, structured data, and depth over listicle brevity. For B2B tech businesses, AI search is already a meaningful traffic source and growing. The content and technical patterns above compound over time.

If you want a GEO audit of your site, talk to us.
